He isn’t allowed to interfere. But when he found the tiny animal, alone and freezing, the rule book went out the window.

Dr. Aris Thorne is a man who loves the cold. For six months a year, he lives at a remote Arctic research station, studying climate change’s effect on the tundra. His life is governed by data, and by the primary rule of wildlife biology: observe, but never, ever interfere.

He was on his way back from checking a weather sensor, the snow crunching under his boots, when he saw a small flicker of white against the ice. It wasn’t a snowdrift. It was a tiny Arctic fox, curled into a tight ball, its body shivering violently.

On a Facebook page ostensibly dedicated to fans of David Attenborough, a heartwarming vignette was recently posted. It told the story of a man named Dr. Aris Thorne, a wildlife biologist stationed at a remote Arctic research post. In the story, Thorne wrestles with the prime directive of his profession, non-interference, before ultimately succumbing to his humanity and saving a freezing, starving Arctic fox. “To hell with the rules,” he mutters, in a line of dialogue that feels ripped from a mid-tier network drama. The post garnered dozens of likes, with commenters praising Thorne’s compassion. “I think he did the right thing,” wrote one user.

But Dr. Aris Thorne did not save a fox. He did not brave the Arctic winds. In fact, Dr. Aris Thorne does not exist at all.

If you were to search for him, however, you might be forgiven for thinking otherwise. A cursory browse of Amazon’s Kindle store reveals a Dr. Aris Thorne who is the author of Money Matters: Financial Basics for Teenagers and College Students and the protagonist of self-published thrillers like Transdimensional Invasion: Fractured Mind. He is also an expert on ADHD, on reclaiming your body from VR (apparently), and, most of all, on how Introverted Women in STEM can Navigate Corporate Politics, Amplify Their Impact, and Build the Career They Deserve. (Yay!) On podcast directories, he is listed as a guest expert on true crime, the tension between ambition and self, and realizing that one of the prime numbers was, in fact, false. In the looking-glass depths of randomly generated social media content, such as “storytime” channels and AI-generated blogs, he has died and destroyed Earth with him, been captured by nebulous agents for finding a warp in spacetime (r/conspiracy), and suffered several worse fates.

Dr. Aris Thorne is perhaps the most accomplished polymath of the twenty-first century. He is also a man who does not exist. Thorne is not a pseudonym for a human author, nor is he a public domain character like Sherlock Holmes. He is, in fact, what we can call a “promptonym”: a recurring, emergent artifact of Large Language Models (LLMs). When asked to generate a name for a scientist, a hero, or an expert, the world’s most advanced artificial intelligence systems, from Google’s Gemini to OpenAI’s GPT series, have collectively decided that the answer is “Aris Thorne” (or variations thereof).

The ubiquity of this phantom figure began to unsettle some enthusiasts a while ago. On online forums like r/OpenAI and r/LocalLLaMA, users have been trading stories of their encounters with the doctor. “Why is Dr. Aris Thorne everywhere?” asked one user, noting that regardless of whether they asked for a marine biologist or a doctor, the AI insisted on the name. Another user, experimenting with Google’s lightweight Gemma model, complained that the software “keeps renaming my characters to Dr. Aris Thorne,” lamenting that the model was perilous for creative writing because of its obsession with this single, specific identity.

Thorne is not alone in the pantheon of names that turn up suspiciously often. As the technology writer Max Read noted in his newsletter recently, a figure named “Elara Voss” has colonized the generated literature of the web in a similar manner, appearing in dozens of nonsensical books and sci-fi summaries. But while Voss tends to occupy the role of the visionary physicist or the “chosen one” in fantasy settings, Thorne has carved out a niche as the professional, the man of science who is a background expert in many, many different topics.

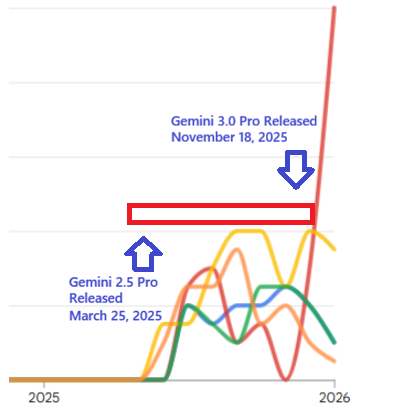

Data from Google Trends certainly show that Thorne, Voss, and many names like them were not industry standards before sometime in 2024. The following graph includes other commonly accused names like Marcus Thorne, Elara Vance, and others.

Graph detailing the rise of select AI-generated names. Blue – Aris Thorne, Green – Elara Vance, Orange – Alistair Finch, Yellow – Marcus Thorne, Red – Elara Voss.

Furthermore, another group of commonly mentioned names already existed in Google Trends before spiking in the exact same time period, raising the suspicion that they, too, are tied to AI-generated stock names. David Chen, a name common enough that, unlike Aris Thorne, it can conceivably pass as real, is a chief example.

A very suspicious-looking jump. Red – David Chen, Blue – Aris Thorne.

The truth, despite the claims of the genius of Dr. Aris Thorne, is in fact much more mundane, and quite revealing about the limitations of generative AI. The existence of Dr. Aris Thorne is not a sign of a burgeoning machine consciousness, but a testament to the repetitive nature of the data we, or more precisely the model makers, fed it.

Guillaume Laforge, a developer and AI researcher, recently conducted an investigation into the “Sci-Fi Naming Problem.” After noticing that his own experiments with story generation were yielding a suspiciously high number of protagonists named Thorne, Anya, and Elena, Laforge hypothesized that the models weren’t hallucinating new ideas so much as regurgitating old ones. He turned his attention to Kaggle, a popular platform for data scientists, and specifically to a few widely used datasets of science-fiction book descriptions and texts.

What Laforge found was probably the smoking gun of Thorne’s existence. In a dataset of book descriptions used to train early models, a character named “Dr. Thorne” appeared 204 times across 26 different descriptions. Other names, like “Anya” and “Elena,” appeared with similar frequency. These datasets, often viewed as “low-hanging fruit” by researchers, were likely used to fine-tune the first generations of creative writing AIs.

The mechanism at play thus appears to be a statistical trap. When an LLM is asked to write a sci-fi story, it looks for the most statistically probable sequence of words associated with that genre. Because “Dr. Thorne” is disproportionately represented in the specific, niche datasets the models were trained on, the AI treats the name not as a quirk in the training data but as something that MUST appear, even if the user specifically demands otherwise, because it is “correct” (according to the training dataset!). The model has learned the training data too well, mistaking a quirk of a small sample for a universal rule of how every single story must be told.
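The trap can be illustrated with a toy calculation. The sketch below is not how any real model is built; it only assumes that name probabilities roughly follow how often each name appears in the training text. The corpus, its counts, and the function names are invented for illustration.

```python
import math
from collections import Counter

# Hypothetical toy corpus: surnames following "Dr." in sci-fi blurbs.
# These counts are invented for illustration, not taken from any real dataset.
corpus_names = ["Thorne"] * 20 + ["Okafor"] * 3 + ["Lindqvist"] * 2 + ["Park"] * 1


def name_probabilities(names, temperature=1.0):
    """Turn raw counts into a probability distribution over names.

    Log-counts stand in for the model's logits. Dividing by the
    temperature sharpens (T < 1) or flattens (T > 1) the distribution.
    """
    counts = Counter(names)
    logits = {name: math.log(c) / temperature for name, c in counts.items()}
    max_logit = max(logits.values())  # subtract the max for numerical stability
    weights = {name: math.exp(l - max_logit) for name, l in logits.items()}
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}


def most_likely_name(names):
    """Greedy choice: always return the single most probable name."""
    probs = name_probabilities(names)
    return max(probs, key=probs.get)


if __name__ == "__main__":
    print(most_likely_name(corpus_names))
    for t in (1.0, 0.5):
        share = name_probabilities(corpus_names, temperature=t)["Thorne"]
        print(f"temperature={t}: P(Thorne) = {share:.3f}")
```

With a skewed sample like this one, always picking the most probable name returns “Thorne” every time, and lowering the temperature pushes its share higher still. The rarer names stay in the distribution but almost never surface.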

This problem is compounded by what researchers call “error propagation.” As one Reddit user theorized, the first generation of LLMs was trained on these small, repetitive libraries. Subsequent models were then trained on the synthetic data produced by their predecessors. If GPT-4 writes a million stories about Dr. Aris Thorne, and GPT-5 is trained on an internet that GPT-4 helped populate, by way of the “synthetic data” corpus, the bias is reinforced. The feedback loop calcifies the error. Aris Thorne becomes a self-fulfilling prophecy, one that is engineered by an ever-growing pile of AI-generated stories at that. Specifically, the rise of Aris Thorne appears to be correlated with the launch of Gemini 2.5 Pro, which was released in March 2025 and superseded by Gemini 3.0 Pro last November.
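A deliberately simplified simulation shows why such a loop would reinforce itself. Everything in it is an assumption made for illustration: the starting shares, the `power` parameter standing in for a model's tendency to favor already-likely outputs, and the even split between human-written and synthetic text in each new training set. It is a sketch of the theorized mechanism, not a description of any vendor's actual pipeline.

```python
def sharpen(dist, power=2.0):
    """Model a generator that over-produces already-common names.

    Raising each probability to a power > 1 and renormalizing moves
    mass toward the most frequent entries.
    """
    weights = {name: p ** power for name, p in dist.items()}
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}


def simulate_generations(human_dist, generations=5, synthetic_share=0.5, power=2.0):
    """Return the training distribution seen by each successive model.

    Each new training set mixes the fixed human-written distribution
    with the previous model's (sharpened) output.
    """
    history = [dict(human_dist)]
    training = dict(human_dist)
    for _ in range(generations):
        output = sharpen(training, power)
        training = {
            name: (1 - synthetic_share) * human_dist[name]
            + synthetic_share * output[name]
            for name in human_dist
        }
        history.append(training)
    return history


if __name__ == "__main__":
    # Hypothetical starting shares of each surname in human-written text.
    human = {"Thorne": 0.4, "Okafor": 0.3, "Lindqvist": 0.2, "Park": 0.1}
    for gen, dist in enumerate(simulate_generations(human)):
        print(gen, {name: round(p, 3) for name, p in dist.items()})
```

In this toy setup the share of “Thorne” climbs with every generation while the rarest name shrinks, even though the human-written portion of the data never changes.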

Coincidentally or not, Thorne & Co., with the exception of Elara Voss, plummeted in Google search trends that month. Perhaps Elara Voss is the new darling of the latest crop of Large Language Models, but sadly, true verification is something that even the developers themselves consider far out of reach.

There is a profound irony in the biography of Dr. Aris Thorne. In the stories AI models write about him, he is almost always defined by his humanity. His willingness to “throw the rule book out the window” either leads to good, as in the Arctic fox story, or to something more lurid, as in a tale titled ‘Time is Money’, in which he “uses a temporal displacement unit to travel back to October 23rd, 2042, to prevent the death of his fiancée, Elara.” He is the unpredictable element in a sterile system. In reality, he is the exact opposite. Thorne is perhaps the ultimate conformist, the inevitable result of a system that cannot actually invent names the way we humans still can, and do, today.

We (as in this age’s software pioneers) often imagine that AI will unlock a new era of limitless creativity, producing variations of art and narrative that humans could never conceive. But the tale of Dr. Aris Thorne, the gentleman Alistair Finch, the hero Elara Voss, and most likely a long, long list to come shows that these models hold quite a narrow view of the world and of fiction, and that they shrink it rather than expand it. All alternative names have effectively been weeded out except this single, optimal, statistically probable protagonist named [insert name here, Altman. Or Pichai.]

For now, Dr. Thorne continues his work. Today he is saving a fox in the Arctic; tomorrow he will be analyzing shark acoustics or commanding a starship. He is the hardest working man in fiction, a hero for an age of automated content, tirelessly acting out the same drama of discovery and redemption, towards whatever future this space has in store. Onwards!
